In a recent episode of the WAB Podcast we focused on how we are adapting to AI in education, featuring a special AI guest…

In this episode, aimed at parents and educators, we discussed the development, opportunities and implications of AI in our school and other schools. It draws heavily on my other posts here, and shares our resources on AI, including a special parent page that outlines our position as a school.

The AI Guest

The first ‘guest’ on the show is an AI version of me, using a cloned voice reading GPT-generated text. This was a fun experiment, and something we’d planned to do since before ChatGPT was released, but at that time we wanted to focus on more learning episodes from around the school.

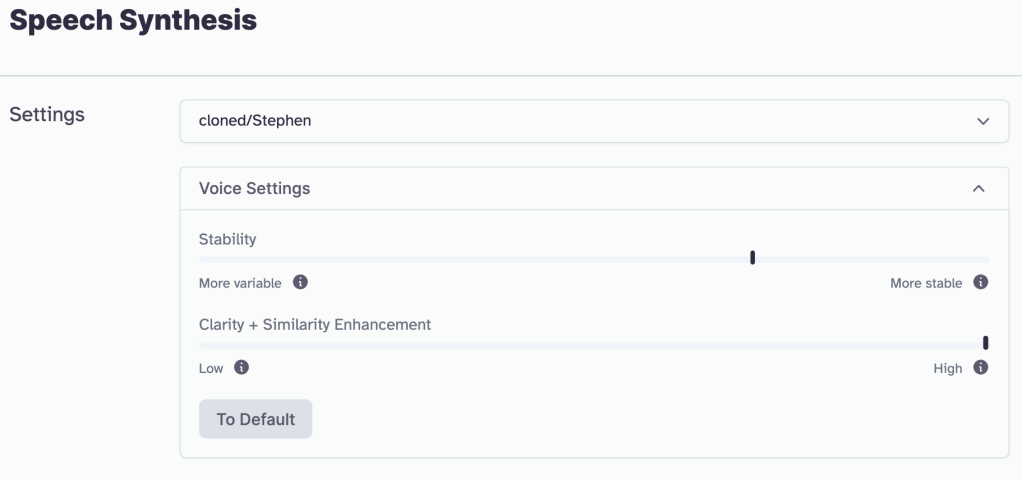

ElevenLabsIO’s (beta) Speech Synthesis tool became popular in the meantime, proving easy to use and ideal for the demonstration.

To get set up, I cloned my voice in the Voice Lab. This only needs about 60 seconds of sample audio. In the original idea for this episode, I was going to use Descript’s voice cloning tool, but it needed ten minutes of sample data. It took a few attempts to get it sounding more like me; in my first attempt I was too wooden and nervous, so the results weren’t great. The best result came when I was relaxed and reading a passage (about 90 seconds) that covered a range of punctuation, in a more natural, upbeat tone.

The text spoken by the guest was written using Craft Docs AI Assistant, with a couple of prompts. Some light editing swapped generic wording for things more like I’d say. It also used “AI” many times, which sounds pretty irritating in audio, so I switched a few out.

To synthesise the speech, I pasted the generated text into Speech Synthesis, and tinkered with the settings. The first attempt came out pretty newsreadery, so I needed to try again. I found the best settings were to go max Clarity & Similarity Enhancement and to move to about 2/3 Stability, for more variance. It still sounds a bit posher than me, but it is very impressive.
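For anyone who would rather script this step than use the web page, here is a minimal sketch of how those slider settings might translate into a request. It is not what was used for the episode, which was made entirely in the web interface. The endpoint pattern, the `xi-api-key` header and the `stability` / `similarity_boost` field names are assumptions based on ElevenLabs' public REST API and should be checked against their current documentation; `build_tts_request` is a hypothetical helper name, and mapping the "Clarity & Similarity Enhancement" slider to `similarity_boost` is also an assumption.

```python
import json
import urllib.request

# Assumed endpoint pattern for the ElevenLabs text-to-speech REST API.
API_URL = "https://api.elevenlabs.io/v1/text-to-speech/{voice_id}"


def build_tts_request(text, voice_id, api_key, stability=0.67, similarity_boost=1.0):
    """Build (but do not send) a speech synthesis request.

    Defaults mirror the settings described above: about 2/3 Stability
    and maximum Clarity & Similarity Enhancement (assumed field names).
    """
    # Both settings are treated as fractions of the slider, from 0 to 1.
    for name, value in (("stability", stability), ("similarity_boost", similarity_boost)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")

    payload = {
        "text": text,
        "voice_settings": {
            "stability": stability,
            "similarity_boost": similarity_boost,
        },
    }
    return urllib.request.Request(
        API_URL.format(voice_id=voice_id),
        data=json.dumps(payload).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it, which needs a real API key and the ID of your own cloned voice. Building the request separately makes it easy to check the settings before anything is sent.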

You can hear the result at the start of the episode, or right here for just the 2-min clip.

Thinking Forwards

If you want to have a go, start with the Education section of the ElevenLabs site, for guidance on how to do it safely and with good results. They have helpful content on digital rights as well.

This is a pretty dramatic example of AI tools in action – it is quick, easy and effective. It raises ethical questions about deepfakes and misuse, and there is some discussion on how well it can (currently) handle accents. My own is unusual, but mostly British, and experienced some poshening. I think the beta demo I used is optimised for American accents.

How might it handle more diverse accents? It is early days for this tech and I hope there are works in progress to ensure that once it is out of beta, it can authentically represent the voice of anyone who uses it, without having to erase or modify their own accent.

In the case of this podcast episode, focused on an educational demo of AI developments, I would only consider cloning my own voice, and would not risk the voice or reputation of anyone else. However, it seems some ‘bad actors’ have misused ElevenLabs, and here is their response (thread):

Voice-cloning AI tools have a lot of potential for recording worthwhile voice-overs and audio content. I imagine a near future where this can be used effectively as an adaptive technology in education (and beyond), giving a realistic and representative voice to anyone. For now it is still early days, so use it with caution and, of course, be responsible.
