Science Teaching

-

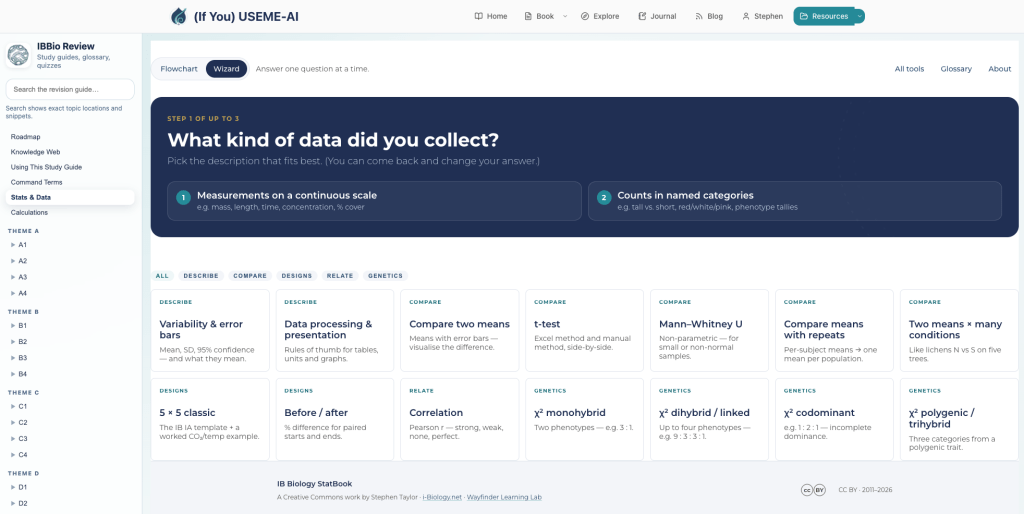

IB Biology Statbook 2026

Updating a 15-year-old resource as an interactive web app for students and teachers.

-

Project Hail Mary

A film of optimism and agency with a decent emotional core.

-

IBDP Maths & Science Practice Questions

Making practice paper questions for calculations in Poe (and an experiment in Claude Code).

-

AI Impact Estimator 2026

Updating the AI Environmental Footprint Estimator for 2026 with new data and features.

-

Making Feedback Visible: Four Levels in Action

Five years ago I was starting to become concerned with the difference between marking and feedback. What was making a difference to my students’ learning and was the effort I was putting into detailed marking worth it in terms of…

-

The Gradebook I Want

How can we develop a system that effectively combines standards-based grading practices with a balance between tracking MYP objectives and content-level standards in parallel?

-

Give a Student a Fish…

“Give a man a fish and he’ll eat for a day. Teach him how to fish and he’ll feed his family for a lifetime.” Anne Ritchie, 1885 (maybe). This short post, again related to Understanding Learners and Learning, Visible Learning…

-

First Unit Reflections: Is It Working?

Summary of student feedback on the unit & teaching.

-

Using Student Learning Data to (try to) Improve Student Data Processing: A Department Goal

This year saw the start of a school-wide push to become more data-driven in our decision-making and evaluation. As a result, one of our goals as teachers at the start of the year was the Student Learning Goal, a target…

-

Using personal GoogleSites for learning, assessment & feedback in #IBBio

This is reposted from my i-Biology.net blog. To comment, please go there. Over the last two years, my IB Bio class have been keeping individual GoogleSites as records and reflections of their learning. Based on this experience and their…

-

Teachers as Researchers & Engaging in Academics

We need to get over ourselves and get involved in education.

-

How Twitter shook my confidence as a teacher (and why that’s a good thing)

I’ve been a Twitter user for about a year and a half. That’s late to the party, I know, but at first I was skeptical. It seemed a time-suck and a frivolity: what could be worth saying in 140 characters?…

-

Curriculum Studies Assignment: Physics & the MYP

With permission from my tutor, here is my Curriculum Studies assignment: “A critical review of a Grade 10 Introductory Physics course as part of the International Baccalaureate Middle Years Programme, examining selected aims and purposes and analyzing the extent to…

-

Differentiation through a ‘Readiness Filter’?

Carrying on from my last reflection on the differentiation workshops here this week… Some subjects have a great freedom of curriculum and are natural fits for student-driven inquiry all the way through to MYP 5 (and beyond if they exist…

-

Time to Think

If we want to push students beyond merely procedural tasks and rote learning, we need to give them enough time to think. I know I sometimes feel that I’m not earning my keep if I’m not actively engaged with each…