“We are the Borg. Lower your shields and surrender your ships. We will add your biological and technological distinctiveness to our own. Your culture will adapt to service us. Resistance is futile.” Star Trek.

How can we protect thinking, memory and human learning when the machines can do it “for us”? This is a (heavily adapted) excerpt from Chapter 2: Understanding Learners, Learning & AI, from my upcoming book, (If You) USEME-AI: Learning for Hope & Agency in an AI World. It pulls in some sub-sections on thinking, memory, cognitive load and System 1 & 2 thinking, and shares some new papers on the proposed System 0 and System 3.

Cognitive What?

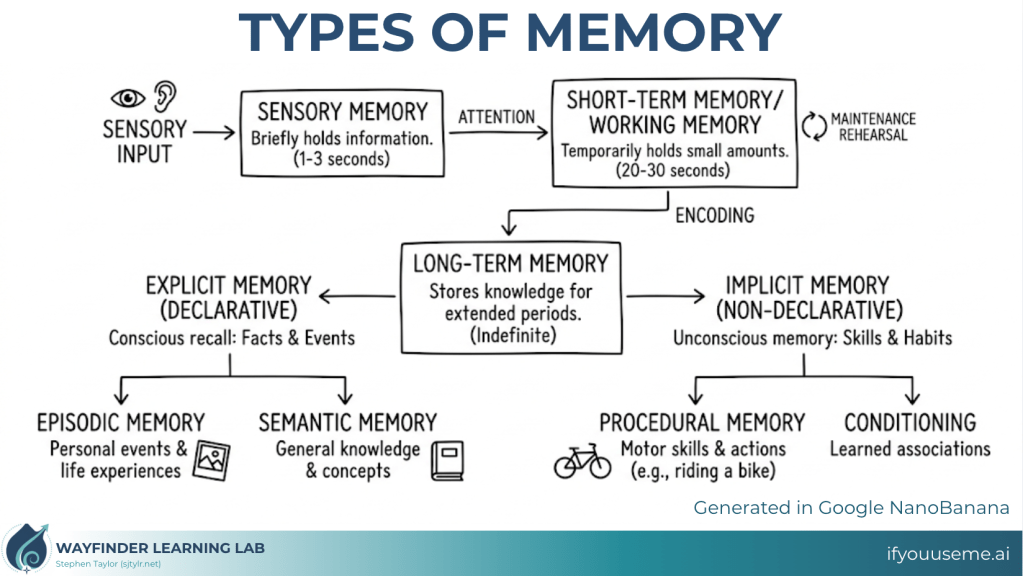

Making learning stick means making memory work, and that relies on neuroplasticity: the mechanism for “supporting long-term memory formation, adaptation and stability”1. Our brains are shaped by experience, reflection, recall and application. As we encounter new stimuli, our limited short-term/working memory processes them, and through rehearsal and maintenance they can be encoded into long-term memory, available to access later2. Some of this long-term memory is implicit, or non-declarative (unconscious skills and habits), and some is explicit, or declarative (episodic memories of events and experiences, and semantic knowledge and concepts). Either way: use it or lose it.

New learning builds on the learning that has come before and requires effort. If our students are putting the right types of effort into their thinking, recall and application, they are building their learning, making connections and developing the capacity for yet more learning.

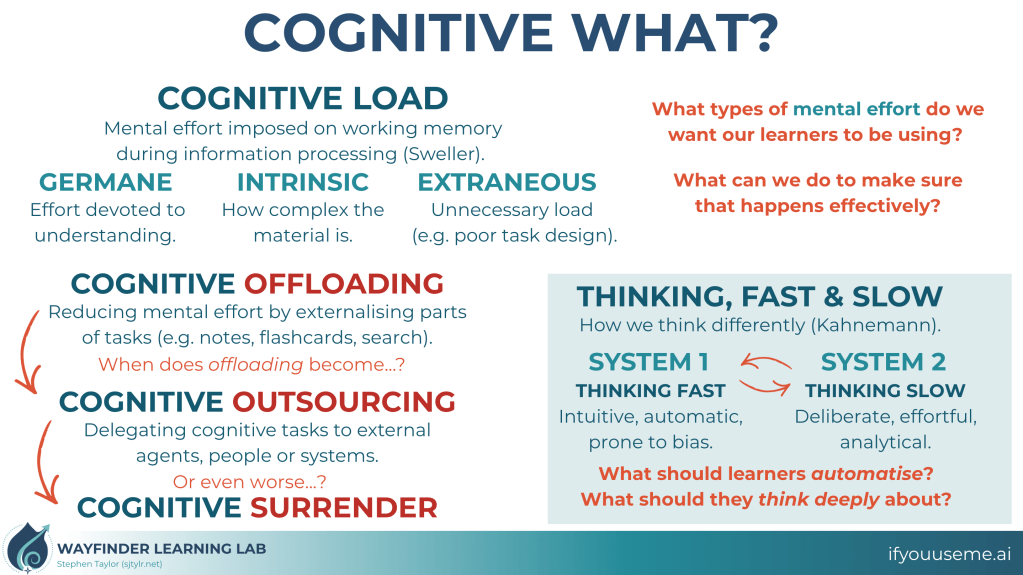

Sweller’s work on cognitive load theory3 is a useful frame for thinking about the effort our brains expend. If cognitive load is the total mental effort of processing information, it can be broken into three sub-types: germane (the load dedicated to understanding), intrinsic (the complexity of the target material), and extraneous (the unnecessary load that can come from poor task design, distractions or decision fatigue). As teachers, being aware of the load we intentionally and unintentionally place on students can make a real difference to what sticks in the long term. How many extraneous decisions do they need to make to access the learning? What are we assuming they can already do, in order to do what we need them to do, that they actually can’t? Learning for automaticity at lower levels of recall and application frees up limited working-memory capacity for deeper thinking, connection and investigation. Planning intentionally for deep thinking about the right things, and making high expectations for real thinking clear, sets the stage for meaningful inquiry.

Cognitive offloading is the use of external aids to reduce the mental effort required for a task4,5. We have always done this: writing notes, using calculators, bookmarking pages. But AI takes it to another level. When a student can get a fully formed essay, a solved maths problem or a complete lab report from a simple prompt, the cognitive work that would have built understanding, memory and transferable skill never happens. When the task gets “one-shotted”, we move from cognitive offloading to cognitive outsourcing, bypassing the learning entirely. So we really need to think about thinking.

Thinking, Fast and Slow… and Non-Human?

In Thinking, Fast and Slow6, Daniel Kahneman describes two systems: System 1 fast thinking (learning that has been automatised and comes intuitively, but which is prone to bias) and System 2 slow thinking (new cognitive “work” that is deliberate, effortful and analytical). When we think about our learners’ thinking, what do we want them to be able to recall and apply automatically, and what do we need them to be thinking deeply about? Kahneman’s ideas have been transposed to descriptions of how AI works, from intuition to deliberation7. AI is not a human brain, but it can learn and function in ways that make for neat analogies. In the current wave of reasoning models, the ability to articulate our questions clearly and to negotiate outputs using domain-specific language could be a genuine learning superpower at the cognitive level.

Given all the complexities of the brain, thinking and memory, we might hold on to one guiding question: What do we need to make routine so that students can access higher-order thinking, and what are we going to do about it?

More recently, Milena Stepanova8 has proposed adding a “System 3” that draws on emotional and affective connections to Systems 1 and 2; an interesting proposition as we engage with (and try to distinguish ourselves from) the machines. What happens when we lose the emotional connection to our learning, or the motivation to keep thinking deeply, when we know the machines can do it for us?

So what if the machines are already affecting our thinking and not the reverse?

A recent paper by Francesco Branda suggests that this is already happening9, with AI acting as a “nonbiological cognitive layer that precedes and modulates human intuitive and reflective thinking”. This builds on Chiriatti et al.’s proposition that human-AI interaction represents a new “System 0” thinking10, which “interacts with and augments both intuitive and analytical thinking processes”. The risk is that AI-mediated processes and information don’t just help us offload tasks; they outsource human agency and accountability. As we move further into an era of the “expanded mind”10, Branda suggests that “Thinkframes”11 are already forming, creating extensive networks of “pervasive cognitive architectures” that might fundamentally shift our relationships with knowledge, attention and decision-making at a social scale.

You might already feel the influence of AI on your own thinking. I used to love an em-dash and various other language moves that are now “indicators” of AI writing, and that has changed the way I write (and worry about writing)12. It makes logical sense: as existing, effective writing structures are swallowed up into the blob of training data, they are spat back out at us in AI outputs. But it doesn’t feel great. AI-related terms are entering the culture, and the homogenising effects of LLMs may be impacting voice13,14 and ideation15,16, squeezing thinking toward a mediocre middle. We are handing over our bodies and our homes to smart-tech and AI wearables, forming relationships with our augmented selves and striving to “close the loop”17.

Interestingly, Shaw & Nave at Wharton have also proposed a new System 3, “artificial cognition that operates outside the brain”18 (closer to Chiriatti’s System 0 than to Stepanova’s System 3), and coined the term “cognitive surrender” for a step beyond outsourcing. They used a “cognitive reflection test” that engages (their) System 3: participants were allowed to consult AI, but some of its answers were seeded as correct and some as incorrect. Accurate AI boosted participants’ accuracy; inaccurate AI reduced it. Either way, “participants with higher trust in AI and lower need for cognition and fluid intelligence showed greater surrender to System 3”. If it’s there, they’ll use it.

Let’s let the academics decide who gets to call what system what. Maybe they can give all their research to Claude and it can work it out 😉 But for us: as Systems 1 & 2 form the cognitive layers of our thinking, how are we going to protect what’s important for students to be able to know and do with fluency, and think about deeply? With the tendrils of Chiriatti’s System 0 (or Shaw & Nave’s System 3?) potentially shifting us to a human-AI hybrid culture, how might we lean on the emotional and affective layers of Stepanova’s System 3 to really highlight what makes us human?

We need to create strong Cultures of Thinking in our responses to AI that prioritise the human-human interactions that drive deeper learning and connections. When we engage with the machines, make it worthwhile… then get outside.

We are the Borg (in the making).

Or are we?

References

1. ScienceDirect. 2025. Long Term Memory.

2. Atkinson RC, Shiffrin RM. 1968. Human memory: a proposed system and its control processes. The Psychology of Learning and Motivation. 2:89–195.

3. Sweller J, Ayres P, Kalyuga S. 2011. Cognitive Load Theory. Springer Nature.

4. Risko EF, Gilbert SJ. 2016. Cognitive offloading. Trends in Cognitive Sciences. 20(9):676–688.

5. Gerlich M. 2025. AI tools in society: Impacts on cognitive offloading and the future of critical thinking. Societies.

6. Kahneman D. 2012. Thinking, Fast and Slow. London: Penguin Books.

7. Geordie. 2025. Silicon Intuition, Silicon Deliberation: How Modern AI Mirrors Kahneman’s Dual Process Theory. Applied Relevance.

8. Stepanova M. 2026. System 3 Thinking: A conceptual expansion of the dual process model for complex human decisions. International Research Journal of Multidisciplinary Scope. 7(1):864–873.

9. Branda F. 2026. When Artificial Intelligence Shapes the way we Think. Philosophy & Technology. 39(1).

10. Chiriatti M, Ganapini M, Panai E, Ubiali M, Riva G. 2024. The case for human-AI interaction as System 0 Thinking. Nature Human Behaviour. 8(10):1829–1830.

11. Cassinadri G. 2026. Thinkframes as AI-mediated cognitive ecologies: Cognitive trade-offs in the AI age. Philosophy & Technology. 39(1):38.

12. Masnick M. 2026. We’re Training Students To Write Worse To Prove They’re Not Robots, And It’s Pushing Them To Use More AI. Techdirt.

13. Agarwal D, Naaman M, Vashistha A. 2025. AI suggestions homogenize writing toward Western styles and diminish cultural nuances. Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems. New York: ACM.

14. Werdiningsih I, Marzuki, Rusdin D. 2024. Balancing AI and authenticity: EFL students’ experiences with ChatGPT in academic writing. Cogent Arts & Humanities. 11(1):23502918.

15. Anderson BR, Shah JH, Kreminski M. 2024. Homogenization effects of large language models on human creative ideation. Creativity and Cognition. New York: ACM; p. 413–425.

16. Moon K, Green A, Kushlev K. 2025. Homogenizing effect of a large language model (LLM) on creative diversity: An empirical comparison of human and ChatGPT writing. PsyArXiv.

17. Buckley M. 2025. Becoming the Borg: how wearables quietly assimilate the self. Medium.

18. Shaw SD, Nave G. 2026. Thinking—Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender. SSRN.

Thank you for your comments.